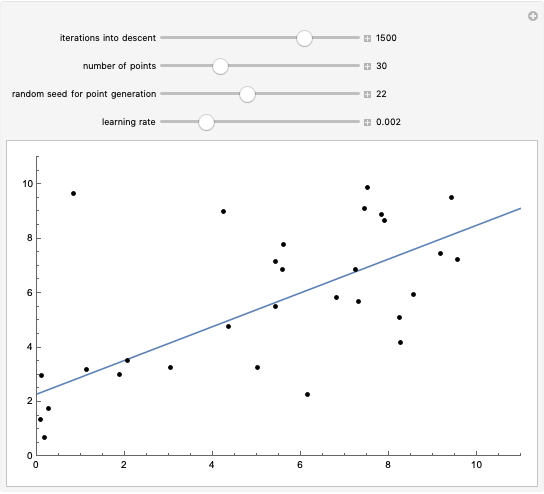

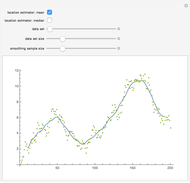

Linear and Quadratic Curve Fitting Practice

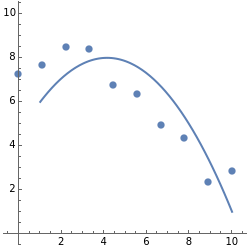

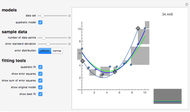

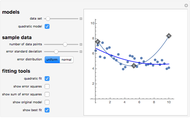

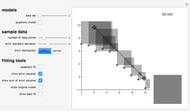

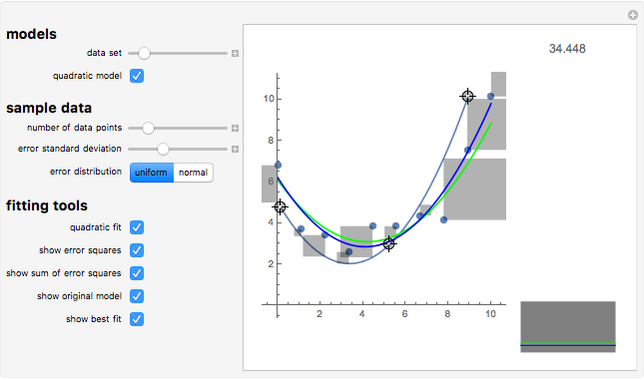

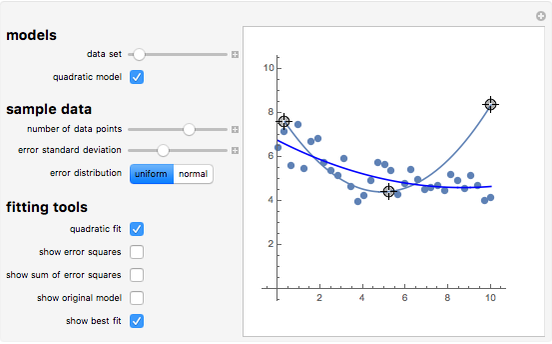

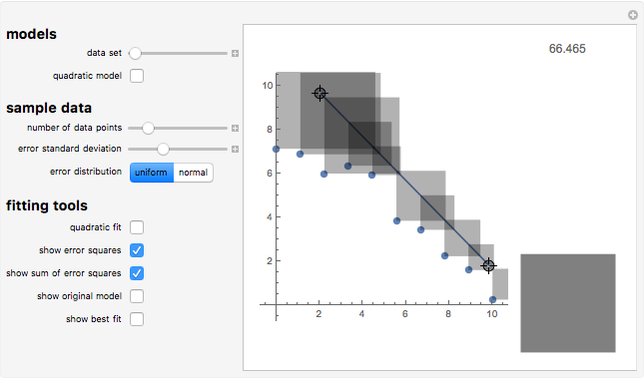

Practice fitting lines and curves to sample datasets, then compare your fit to the best possible.
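
As a rough illustration of what "the best possible" fit could mean, the sketch below assumes it is the ordinary least-squares fit, which is not stated on this page. The function names `polyfit_least_squares` and `sum_squared_error`, the sample data, and the hand-guessed line are all hypothetical and are not taken from the Demonstration itself.

```python
def polyfit_least_squares(xs, ys, degree):
    """Return [c0, c1, ..., c_degree] minimizing the sum of squared residuals
    for the model y = c0 + c1*x + ... + c_degree*x**degree."""
    n = degree + 1
    # Normal equations (A^T A) c = A^T y, where A[i][j] = xs[i] ** j.
    ata = [[sum(x ** (r + c) for x in xs) for c in range(n)] for r in range(n)]
    aty = [sum(y * x ** r for x, y in zip(xs, ys)) for r in range(n)]
    # Augmented matrix, solved by Gaussian elimination with partial pivoting.
    m = [row + [b] for row, b in zip(ata, aty)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    # Back substitution.
    coeffs = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][k] * coeffs[k] for k in range(r + 1, n))
        coeffs[r] = (m[r][n] - known) / m[r][r]
    return coeffs


def sum_squared_error(coeffs, xs, ys):
    """Sum of squared vertical distances between the data and the curve."""
    return sum(
        (y - sum(c * x ** j for j, c in enumerate(coeffs))) ** 2
        for x, y in zip(xs, ys)
    )


if __name__ == "__main__":
    # Made-up sample data, roughly following a straight line.
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.1, 2.9, 5.2, 6.8, 9.1]

    guess = [1.0, 2.0]                        # a hand-picked line y = 1 + 2x
    best_line = polyfit_least_squares(xs, ys, 1)
    best_quad = polyfit_least_squares(xs, ys, 2)

    print("guess error:    ", sum_squared_error(guess, xs, ys))
    print("best line error:", sum_squared_error(best_line, xs, ys))
    print("best quad error:", sum_squared_error(best_quad, xs, ys))
```

Comparing the error of a hand-picked curve against the least-squares error gives one simple measure of how close a manual fit comes to the optimum under this assumption. The normal-equations approach is fine for low degrees such as 1 and 2, though it becomes numerically fragile for high-degree polynomials.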

Contributed by: Jon McLoone (March 2011)

Open content licensed under CC BY-NC-SA

Permanent Citation

"Linear and Quadratic Curve Fitting Practice"

http://demonstrations.wolfram.com/LinearAndQuadraticCurveFittingPractice/

Wolfram Demonstrations Project

Published: March 7 2011