Markov Chain Monte Carlo Simulation Using the Metropolis Algorithm


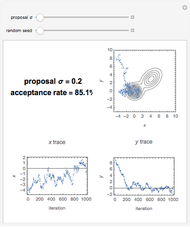

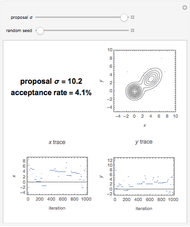

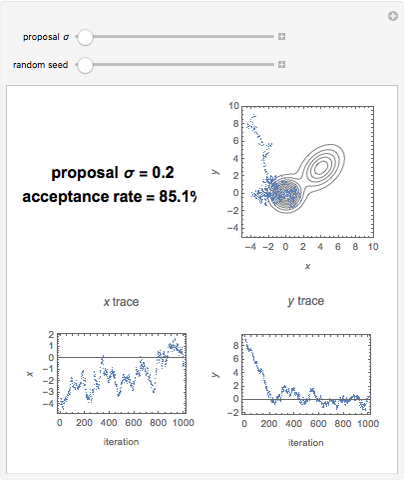

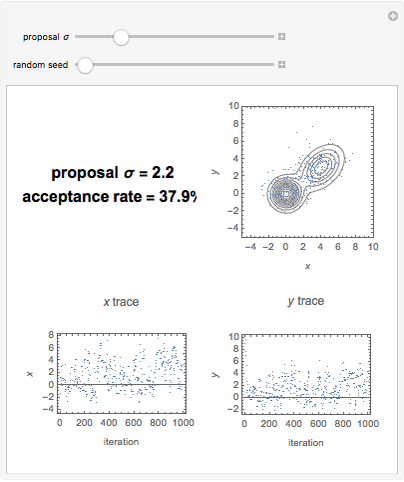

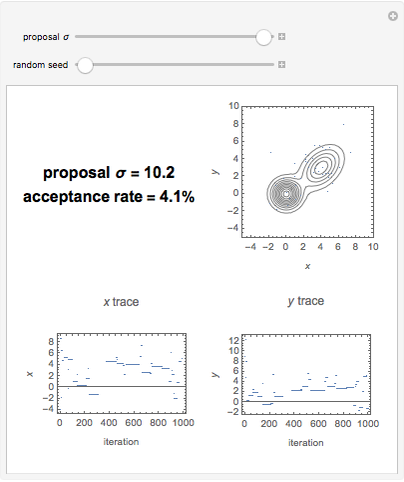

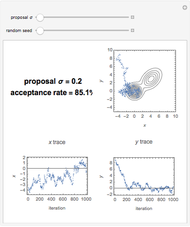

This Demonstration allows a simple exploration of how Metropolis algorithm sampling of a two-dimensional target probability distribution depends on the width (sigma) of a circularly symmetric Gaussian proposal distribution. If sigma is too small, almost all proposals are accepted, but the samples are highly correlated and the burn-in period is long. If sigma is too large, hardly any proposals are accepted and the sampling sticks: whenever a proposal is not accepted, the new sample is just a repeat of the previous sample. Somewhere in between, the samples are minimally correlated and concentrate efficiently in regions where the target probability distribution is significant.
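The effect of the proposal width can be reproduced outside the Demonstration with a minimal sketch in Python. This is not the Demonstration's own code: the target below is an assumed mixture of two elliptical Gaussians with illustrative means and widths, and the names `log_target` and `metropolis` are hypothetical.

```python
import math
import random


def log_target(x, y):
    """Log of an unnormalized target: equal mixture of two elliptical Gaussians.

    The means and widths are illustrative assumptions, not the Demonstration's values.
    """
    def log_gauss(mx, my, sx, sy):
        return -0.5 * (((x - mx) / sx) ** 2 + ((y - my) / sy) ** 2) - math.log(sx * sy)

    a = log_gauss(0.0, 0.0, 1.0, 0.5)
    b = log_gauss(4.0, 3.0, 0.5, 1.0)
    m = max(a, b)
    # log-sum-exp keeps the mixture stable far from both peaks
    return m + math.log(math.exp(a - m) + math.exp(b - m))


def metropolis(n_steps, sigma, start=(0.0, 0.0), seed=0):
    """Run a Metropolis chain with a circularly symmetric Gaussian proposal.

    Returns the list of samples and the fraction of accepted proposals.
    """
    rng = random.Random(seed)
    x, y = start
    lp = log_target(x, y)
    samples = []
    accepted = 0
    for _ in range(n_steps):
        # propose a move drawn from a Gaussian of width sigma centered on the current point
        xp = x + rng.gauss(0.0, sigma)
        yp = y + rng.gauss(0.0, sigma)
        lpp = log_target(xp, yp)
        # accept with probability min(1, p(proposed) / p(current))
        if math.log(1.0 - rng.random()) < lpp - lp:
            x, y, lp = xp, yp, lpp
            accepted += 1
        # a rejected proposal repeats the previous sample
        samples.append((x, y))
    return samples, accepted / n_steps


if __name__ == "__main__":
    for sigma in (0.05, 1.0, 50.0):
        _, rate = metropolis(5000, sigma)
        print(f"sigma={sigma}: acceptance rate {rate:.3f}")
```

Running the loop at the bottom with a very small, a moderate, and a very large sigma should show the acceptance rate falling as sigma grows, which mirrors the behavior described above.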


Contributed by: Philip Gregory (Physics and Astronomy, University of British Columbia) (March 2011)

Open content licensed under CC BY-NC-SA


Details

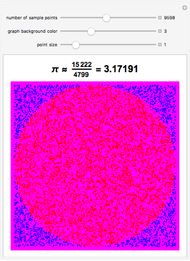

Monte Carlo methods provide approximate solutions to a great variety of problems in science and economics by performing statistical sampling experiments on a computer. The method applies to problems with no probabilistic content as well as to those with inherent probabilistic structure. Among all Monte Carlo methods, Markov chain Monte Carlo (MCMC) provides the greatest scope for dealing with very complicated systems.

MCMC was first introduced in the early 1950s by statistical physicists (N. Metropolis, A. Rosenbluth, M. Rosenbluth, A. Teller, and E. Teller) as a method for the simulation of simple fluids. In the 1990s, the method began to play an important role in bioinformatics (the science of developing computer databases and algorithms to facilitate and expedite biological research, particularly in genomics). The current renaissance in Bayesian statistics stems in large part from the use of MCMC methods to evaluate integrals in many dimensions.

The earliest and still widely used MCMC method is called the Metropolis algorithm. In this Demonstration the Metropolis algorithm is used to draw samples from a two-dimensional probability distribution that consists of two elliptical Gaussians. Of course, the real value of the algorithm is in dealing with much higher-dimensional problems. The amazing property of the Metropolis algorithm is that, after an initial burn-in period (which is discarded), the algorithm generates an equilibrium distribution of samples with a density proportional to the underlying probability distribution. It concentrates samples in regions with significant probability. These samples can then be used to approximate the integrals that are needed in many statistical problems.
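As a hedged illustration of the last point, the sketch below discards a burn-in period and then averages a function over the remaining samples to approximate an integral of the form ∫ f(x, y) p(x, y) dx dy. For simplicity it assumes a single elliptical Gaussian target with a known mean, so the estimate can be checked. The constants `MEAN` and `SD` and the helper names `metropolis_chain` and `expectation` are hypothetical and not part of the Demonstration.

```python
import math
import random

MEAN = (2.0, -1.0)  # assumed target mean, chosen for illustration
SD = (1.0, 0.5)     # assumed standard deviations along x and y


def log_target(x, y):
    """Log of an unnormalized elliptical Gaussian density."""
    return -0.5 * (((x - MEAN[0]) / SD[0]) ** 2 + ((y - MEAN[1]) / SD[1]) ** 2)


def metropolis_chain(n_steps, sigma, start, seed=0):
    """Return a list of (x, y) samples from a Metropolis chain."""
    rng = random.Random(seed)
    x, y = start
    lp = log_target(x, y)
    chain = []
    for _ in range(n_steps):
        xp = x + rng.gauss(0.0, sigma)
        yp = y + rng.gauss(0.0, sigma)
        lpp = log_target(xp, yp)
        # Metropolis rule: accept with probability min(1, p(proposed) / p(current))
        if math.log(1.0 - rng.random()) < lpp - lp:
            x, y, lp = xp, yp, lpp
        chain.append((x, y))
    return chain


def expectation(chain, f, burn_in):
    """Approximate the integral of f times the target by a post-burn-in sample average."""
    kept = chain[burn_in:]
    return sum(f(x, y) for x, y in kept) / len(kept)


if __name__ == "__main__":
    # start deliberately far from the peak so the burn-in period is visible
    chain = metropolis_chain(20000, sigma=1.0, start=(10.0, 10.0))
    print("E[x] estimate:", expectation(chain, lambda x, y: x, burn_in=2000))
    print("E[y] estimate:", expectation(chain, lambda x, y: y, burn_in=2000))
```

The burn-in length of 2000 is an arbitrary choice for this sketch; in practice it would be judged from the chain itself, for example by inspecting when the samples stop drifting from the starting point.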