Union Bound Probability in Error-Correction Coding

Requires a Wolfram Notebook System

Interact on desktop, mobile and cloud with the free Wolfram Player or other Wolfram Language products.

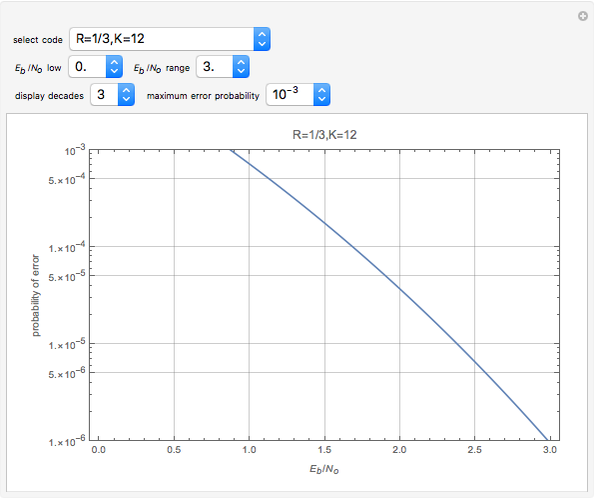

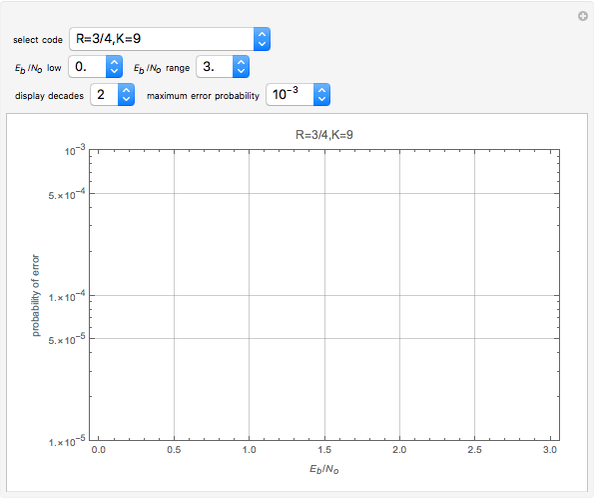

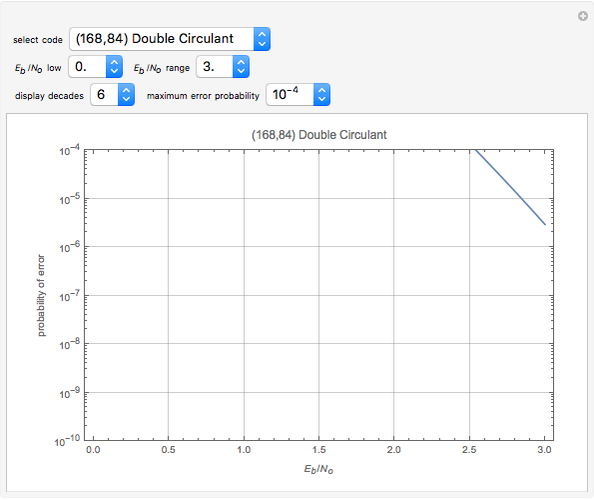

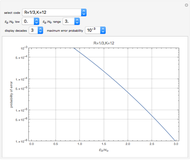

This Demonstration calculates the union bound probability of bit error for a digital communication system that uses error correction coding over a power-limited Gaussian noise channel. Use the "select code" drop-down menu to choose the error correction code to be evaluated. These codes are "good," if not optimal or near optimal, for the code parameters chosen. The probability of bit error is plotted versus the received  in dB (the

in dB (the  term is related to the received signal-to-noise ratio). The performance curve may be plotted over broad or narrow ranges of

term is related to the received signal-to-noise ratio). The performance curve may be plotted over broad or narrow ranges of  and probability of bit error using the other drop-down menus. This lets you zoom in or out of specific portions of the performance curve and allows accurate characterization of performance in any error-rate region of interest.

and probability of bit error using the other drop-down menus. This lets you zoom in or out of specific portions of the performance curve and allows accurate characterization of performance in any error-rate region of interest.

Contributed by: Bibb Cain and Mike Luntz (December 2017)

Open content licensed under CC BY-NC-SA

Snapshots

Details

An error-correcting code is an approach for encoding information bits to produce transmitted channel bits that are related to the information bits by a set of linear constraints. These linear constraints and the fact that the number of transmitted bits is larger than the number of information bits allows a decoding algorithm to mathematically identify incorrect received channel bits, thereby allowing correction of channel errors. This can be done very effectively if the channel error rate is not too high (i.e. the channel signal-to-noise ratio is large enough), the selected code is "good," and the decoding algorithm is "good enough." The uncoded case is included for comparison. The uncoded system transmits the information bits directly with no linear constraints between bits, and the number of transmitted bits is equal to the number of information bits. For the calculations in this Demonstration, this case assumes that the channel modulator and demodulator are performing optimal transmission and reception of binary phase shift keying (BPSK) or quadrature phase shift keying (QPSK) [1].

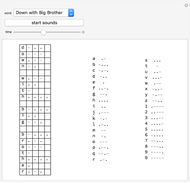

This Demonstration has a large embedded database of block and convolutional codes. The convolutional codes are indicated in the "select code" drop-down menu with the notation " " (this is a code that was widely used in the past), where the parameter

" (this is a code that was widely used in the past), where the parameter  indicates the code rate. The rate

indicates the code rate. The rate  is the ratio of the number of information bits to total bits transmitted over the channel. The code database has example codes with a variety of code rates. In the "

is the ratio of the number of information bits to total bits transmitted over the channel. The code database has example codes with a variety of code rates. In the " " example, the transmitted data rate is twice the information rate. The parameter

" example, the transmitted data rate is twice the information rate. The parameter  is the code constraint length, which is the range over which a given information bit is influencing the transmitted channel bits. Code error-correcting capability improves with increasing

is the code constraint length, which is the range over which a given information bit is influencing the transmitted channel bits. Code error-correcting capability improves with increasing  (increasing the encoding and decoding algorithm complexity with more constraints among the information and channel bits). The convolutional codes provided in the "select code" drop-down menu are optimal or near optimal. The union bound performance results calculated assume an optimal decoding algorithm using an infinite number of levels of quantization of the demodulator output value. The Viterbi algorithm is a well-known practical and optimal decoding algorithm that can achieve this level of performance within implementation constraints [2]. Since the decoding algorithm complexity increases exponentially with

(increasing the encoding and decoding algorithm complexity with more constraints among the information and channel bits). The convolutional codes provided in the "select code" drop-down menu are optimal or near optimal. The union bound performance results calculated assume an optimal decoding algorithm using an infinite number of levels of quantization of the demodulator output value. The Viterbi algorithm is a well-known practical and optimal decoding algorithm that can achieve this level of performance within implementation constraints [2]. Since the decoding algorithm complexity increases exponentially with  , this level of performance can be achieved only for relatively short codes. A

, this level of performance can be achieved only for relatively short codes. A  decoder was constructed several years ago by NASA for a deep-space application, so Viterbi decoders for values of

decoder was constructed several years ago by NASA for a deep-space application, so Viterbi decoders for values of  in this vicinity are feasible. Code weight structures that are unique to each code are needed in order to calculate the union bound. These relevant weight structures are embedded in the database of this Demonstration and are used to evaluate the union bound code performance.

in this vicinity are feasible. Code weight structures that are unique to each code are needed in order to calculate the union bound. These relevant weight structures are embedded in the database of this Demonstration and are used to evaluate the union bound code performance.

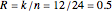

Block codes are denoted by the convention  with the code name as in the example "

with the code name as in the example " " code. The first parameter

" code. The first parameter  is the block length or the number of transmitted bits per code block. In this example, the 24 bits in the block are a linear combination of the 12 information bits in the block that is the second parameter

is the block length or the number of transmitted bits per code block. In this example, the 24 bits in the block are a linear combination of the 12 information bits in the block that is the second parameter  . With 12 information bits per 24 transmitted bits, this code has a code rate of

. With 12 information bits per 24 transmitted bits, this code has a code rate of  . No information bits in a given block influence the transmitted bits in any other block. Implementing an optimal decoding algorithm for shorter codes and for very high rate and very low rate longer block codes is quite feasible, but implementing an optimal decoding algorithm for moderate rate longer codes is more difficult except in special cases. In some cases, an iterative decoding algorithm may be employed [3]. Very long block codes such as LDPC codes employing iterative methods are not included in this Demonstration, since their performance may not be evaluated accurately by the union bound method.

. No information bits in a given block influence the transmitted bits in any other block. Implementing an optimal decoding algorithm for shorter codes and for very high rate and very low rate longer block codes is quite feasible, but implementing an optimal decoding algorithm for moderate rate longer codes is more difficult except in special cases. In some cases, an iterative decoding algorithm may be employed [3]. Very long block codes such as LDPC codes employing iterative methods are not included in this Demonstration, since their performance may not be evaluated accurately by the union bound method.

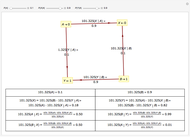

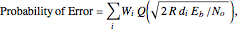

The union bound calculation of probability of bit error for BPSK or QPSK over a power-limited Gaussian channel uses the following equation [3]:

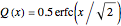

where the  function is related to the complementary error function

function is related to the complementary error function  by

by

.

.

The terms  and

and  comprise the code weight structure that is unique for each code. The

comprise the code weight structure that is unique for each code. The  term is the Hamming weight of a nonzero code word and the

term is the Hamming weight of a nonzero code word and the  term is the weight multiplier that is related to the number of nonzero code words with Hamming weight

term is the weight multiplier that is related to the number of nonzero code words with Hamming weight  . The terms of smallest Hamming weight are the most significant in terms of an accurate calculation of error rate. Typically for the calculations in this Demonstration, the five smallest Hamming weight terms are used. The nature of the union bound calculation is that the union bound is least accurate at higher values of probability (such as typically above

. The terms of smallest Hamming weight are the most significant in terms of an accurate calculation of error rate. Typically for the calculations in this Demonstration, the five smallest Hamming weight terms are used. The nature of the union bound calculation is that the union bound is least accurate at higher values of probability (such as typically above  ). The union bound is most accurate at high signal-to-noise ratios and consequently at very low error rates (such as

). The union bound is most accurate at high signal-to-noise ratios and consequently at very low error rates (such as  and lower). Note that in recent times, the error rates of interest in applications are very low, for example,

and lower). Note that in recent times, the error rates of interest in applications are very low, for example,  ,

,  or

or  . Note that the union bounds calculated with this formula are accurate at low error rates and for many levels of quantization of the demodulator output value. There are applications that use so-called "hard decisions" at the demodulator output, which is a simple two-level quantization. In this case, the performance is degraded from that which we calculate in this Demonstration. The degradation is about 2 dB at moderately low error rates in the range of

. Note that the union bounds calculated with this formula are accurate at low error rates and for many levels of quantization of the demodulator output value. There are applications that use so-called "hard decisions" at the demodulator output, which is a simple two-level quantization. In this case, the performance is degraded from that which we calculate in this Demonstration. The degradation is about 2 dB at moderately low error rates in the range of  to

to  . In the absence of a more accurate calculation for a specific code, assume that for a hard decision union bound calculation, the

. In the absence of a more accurate calculation for a specific code, assume that for a hard decision union bound calculation, the  required for error rates in this range is about 2 dB higher than the calculation provided by this Demonstration. This degradation increases to about 3 dB at extremely low error rates. For 8-level or 16-level quantization, the

required for error rates in this range is about 2 dB higher than the calculation provided by this Demonstration. This degradation increases to about 3 dB at extremely low error rates. For 8-level or 16-level quantization, the  required for error rates in this range is a few tenths of a dB higher than the calculation provided by this Demonstration.

required for error rates in this range is a few tenths of a dB higher than the calculation provided by this Demonstration.

The weight structures for a number of the convolutional codes in this Demonstration are given in [3]. The weight structures for the block codes in this Demonstration as well as other block codes are given in [4]. The code generators for many of these codes are also given in [3] and in other publications.

This Demonstration has the following controls:

1. The "select code" drop-down menu lists the database of block and convolutional codes. Convolutional codes are indicated by code parameters as indicated in the discussion above. Block codes are indicated by the code parameters followed by the code name, such as " ". After selecting a code from this list, a performance curve is displayed using the default parameters for the other controls.

". After selecting a code from this list, a performance curve is displayed using the default parameters for the other controls.

2. After selecting a code from the drop-down list, you can set the lower limit with the " low" drop-down menu and the range with "

low" drop-down menu and the range with " range".

range".

3. Use the "maximum error probability" drop-down menu to select the upper limit on the plotted probability of error.

4. Use the "display decades" drop-down menu to select the number of decades of probability of error for the plot. For example, if "3." is selected, then the probability of error axis extends from a maximum of "maximum error probability" to a minimum of "maximum error probability/1000."

References

[1] R. G. Gallager, Principles of Digital Communication, New York: Cambridge University Press, 2008.

[2] A. J. Viterbi, "Error Bounds for Convolutional Codes and an Asymptotically Optimum Decoding Algorithm," IEEE Transactions on Information Theory, 13(2), 1967 pp. 260–269. doi:10.1109/TIT.1967.1054010.

[3] G. C. Clark and J. B. Cain, Error-Correction Coding for Digital Communications, New York: Plenum Press, 1981.

[4] "List of Weight Distributions." The On-Line Encyclopedia of Integer Sequences. (Nov 20, 2017) oeis.org/wiki/List_of_weight _distributions.

Permanent Citation