Comparing Ambiguous Inferences when Probabilities Are Imprecise

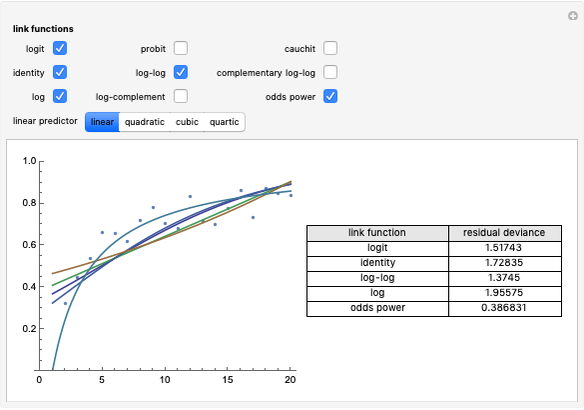

How do you interpret the result of the diagnostic test for the level of a state variable when some or all of the information underlying the inference is ambiguous (imprecise)?

Contributed by: John Fountain and Philip Gunby (March 2010)

Open content licensed under CC BY-NC-SA

Snapshots

Details

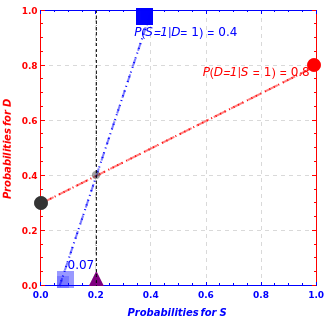

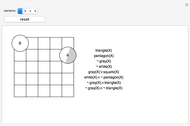

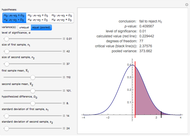

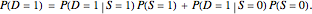

The dashed lines in the graph are coherency constraints on the inference process. Write $S$ for the proposition that the state is present and $+$ for a positive test result. The marginal probability for the diagnostic test result being positive, $P(+)$, is a weighted average of the sensitivity, $P(+ \mid S)$, and the false positive rate, $P(+ \mid \neg S)$ (that is, 1 - specificity), as specified in the linear equation:

$$P(+) = P(+ \mid S)\,P(S) + P(+ \mid \neg S)\,\bigl(1 - P(S)\bigr).$$

This equation is represented by the dashed red line between the two circles on the right- and left-hand margins of the graphical interface.
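As a rough illustration of this weighted-average constraint, the sketch below computes the marginal probability of a positive result from a sensitivity, a specificity, and a base rate. The function name `marginal_positive` and its parameter names are hypothetical, chosen for this example; they are not taken from the Demonstration's source code.

```python
def marginal_positive(sensitivity: float, specificity: float, base_rate: float) -> float:
    """Marginal probability of a positive test (law of total probability)."""
    false_positive_rate = 1.0 - specificity
    # Weighted average of the sensitivity and the false positive rate,
    # with weights base_rate and 1 - base_rate.
    return sensitivity * base_rate + false_positive_rate * (1.0 - base_rate)


# Initial slider values quoted in the Details text:
# 80% sensitivity, 70% specificity, 20% base rate.
p_pos = marginal_positive(0.80, 0.70, 0.20)
print(round(p_pos, 4))
```

At a base rate of 0 the function returns the false positive rate, and at a base rate of 1 it returns the sensitivity. If the horizontal axis of the plot is the base rate (an assumption about the layout), these are the two endpoints of the red line.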

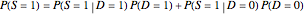

$P(S)$ is the marginal or unconditional probability of the proposition that the state $S$ is true, that is, the base rate. It too must be an appropriate weighted average of posterior inferences about the state given various levels of the diagnostic signal (a positive result, $+$, or a negative result, $-$), as specified in the following linear equation:

$$P(S) = P(S \mid +)\,P(+) + P(S \mid -)\,\bigl(1 - P(+)\bigr).$$

The blue dotted/dashed line between the two squares on the top and bottom horizontal axes expresses this linear relationship.
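A minimal sketch of this second constraint, assuming the usual form of Bayes's theorem for a binary state and a binary test: it computes the two posterior inferences and then checks that their weighted average recovers the base rate. The names `posteriors`, `post_pos`, and `post_neg` are illustrative only, and the code assumes the marginal probability of a positive result lies strictly between 0 and 1, so that neither division is by zero.

```python
def posteriors(sensitivity: float, specificity: float, base_rate: float):
    """Return (P(+), P(S|+), P(S|-)) for a binary state and a binary test."""
    p_pos = sensitivity * base_rate + (1.0 - specificity) * (1.0 - base_rate)
    p_neg = 1.0 - p_pos
    # Bayes's theorem for each test outcome.
    p_state_given_pos = sensitivity * base_rate / p_pos
    p_state_given_neg = (1.0 - sensitivity) * base_rate / p_neg
    return p_pos, p_state_given_pos, p_state_given_neg


# Initial values from the text: 80% sensitivity, 70% specificity, 20% base rate.
p_pos, post_pos, post_neg = posteriors(0.80, 0.70, 0.20)

# Coherence check: the posteriors, weighted by P(+) and 1 - P(+),
# must average back to the base rate.
recovered_base_rate = post_pos * p_pos + post_neg * (1.0 - p_pos)
print(round(post_pos, 4), round(post_neg, 4), round(recovered_base_rate, 4))
```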

The intersection of the red and blue dashed lines solves for the unique pair of marginal probabilities, $\bigl(P(S), P(+)\bigr)$, that satisfies both linear relationships.
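The intersection can be sketched numerically by treating the two constraints as a pair of linear equations in two unknowns. The example below assumes the horizontal coordinate is the base rate and the vertical coordinate is the marginal probability of a positive result (an assumption about the plot's axes). The function `intersect` and its argument names are hypothetical.

```python
def intersect(sensitivity: float, false_positive_rate: float,
              post_pos: float, post_neg: float):
    """Solve the two coherence lines for (base rate, P(+)).

    Red line:  p_pos     = false_positive_rate + (sensitivity - false_positive_rate) * base_rate
    Blue line: base_rate = post_neg + (post_pos - post_neg) * p_pos
    """
    slope_red = sensitivity - false_positive_rate
    slope_blue = post_pos - post_neg
    # Substitute the red line into the blue line and solve for base_rate.
    denom = 1.0 - slope_blue * slope_red
    if abs(denom) < 1e-12:
        raise ValueError("no unique intersection for these inputs")
    base_rate = (post_neg + slope_blue * false_positive_rate) / denom
    p_pos = false_positive_rate + slope_red * base_rate
    return base_rate, p_pos


# Posteriors consistent with 80% sensitivity, 70% specificity, 20% base rate:
# P(S|+) = 0.16 / 0.40 and P(S|-) = 0.04 / 0.60.
base_rate, p_pos = intersect(0.80, 0.30, 0.16 / 0.40, 0.04 / 0.60)
print(round(base_rate, 4), round(p_pos, 4))
```

With these inputs the solver returns the base rate and marginal probability that generated the posteriors, which is the sense in which the two lines must cross at a single coherent point.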

The above two linear relationships are not logically independent. Changing any of the three components of one of the linear relationships means the components of the other relationship change as well. The Demonstration is set up so that the sensitivity, specificity, and base rate that define the red dotted/dashed line between the two circles can be changed by the sliders, and the endpoints of the corresponding changes in the blue dotted/dashed line between the two squares trace out the relevant posterior inferences, $P(S \mid +)$ and $P(S \mid -)$, with the emphasis on the former.

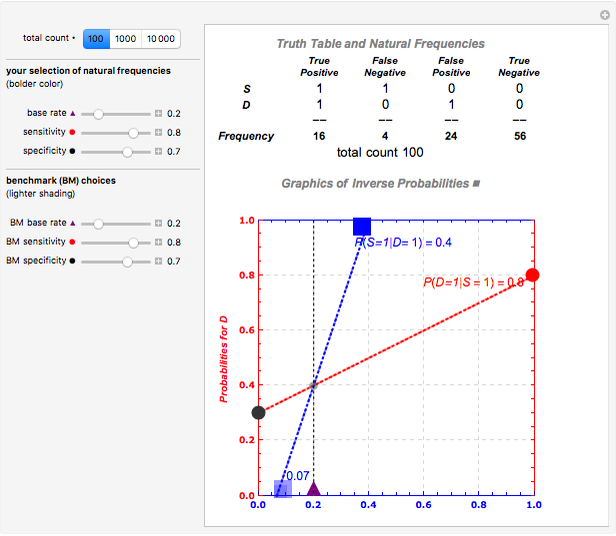

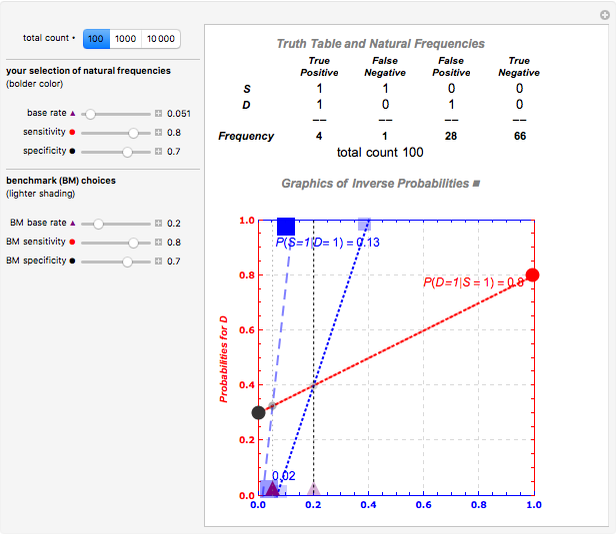

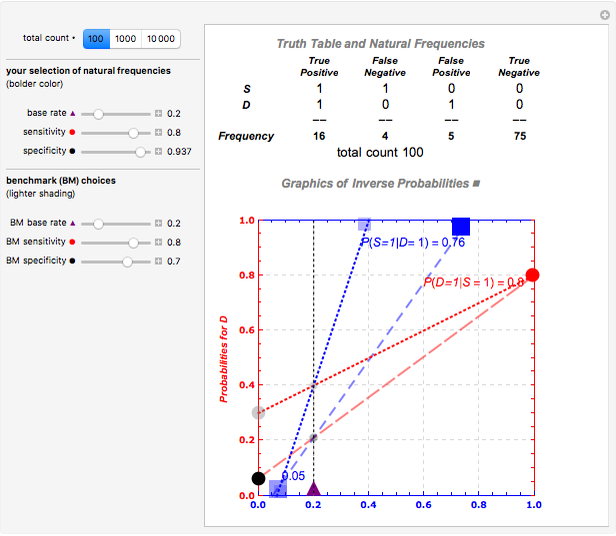

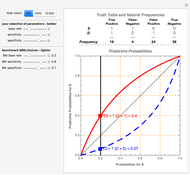

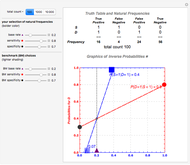

There are two sets of sliders, one for benchmark purposes, the other to examine the impacts of changes in the underlying sensitivity, specificity, and base rate information, either separately or jointly. For example, starting out with the initial values of sensitivity of 80%, specificity of 70%, and base rate of 20%, changing one or all of the top set of three sliders alters the posterior inferences but leaves the reference specification visible. Snapshot 1 shows the initial configuration and the calculation of the relevant inverse probabilities. Snapshot 2 shows the effect of varying only one parameter: reducing the base rate reduces $P(S \mid +)$ significantly in the diagram, and the truth table representation shows why: there are so many more false positives. Snapshot 3 shows the effect of varying another parameter: increasing the specificity of the test increases $P(S \mid +)$ significantly in the diagram, and the truth table representation shows why: the number of false positives drops dramatically. Of course, the reference specification itself can also be changed by changing the sliders in the lower box.
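The snapshot comparisons can be approximated with a small truth table of expected counts. The sketch below uses the initial values from the text as the reference case. The altered base rate (5%) and altered specificity (95%) are hypothetical values chosen for illustration, since the text does not state the settings used in Snapshots 2 and 3. The population of 1000 and the name `truth_table` are also assumptions.

```python
def truth_table(sensitivity: float, specificity: float, base_rate: float,
                population: int = 1000) -> dict:
    """Expected counts in each cell, plus the posterior P(state | positive)."""
    with_state = base_rate * population
    without_state = population - with_state
    true_pos = sensitivity * with_state
    false_pos = (1.0 - specificity) * without_state
    return {
        "true_pos": true_pos,
        "false_neg": with_state - true_pos,
        "false_pos": false_pos,
        "true_neg": without_state - false_pos,
        # Share of positive results that are true positives.
        "ppv": true_pos / (true_pos + false_pos),
    }


scenarios = {
    "reference": (0.80, 0.70, 0.20),                       # values from the text
    "lower base rate (hypothetical 5%)": (0.80, 0.70, 0.05),
    "higher specificity (hypothetical 95%)": (0.80, 0.95, 0.20),
}
tables = {name: truth_table(*params) for name, params in scenarios.items()}
for name, t in tables.items():
    print(f"{name}: TP={t['true_pos']:.0f} FP={t['false_pos']:.0f} PPV={t['ppv']:.3f}")
```

Under these assumed settings, lowering the base rate shrinks the true positives much faster than the false positives, while raising the specificity cuts the false positives directly. This matches the direction of the effects described for Snapshots 2 and 3.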

A fuller explanation of the coherency relationships involved in Bayes's theorem is available in the Demonstration "Bayes Theorem and Inverse Probability". A more descriptive explanation, complete with guided webcasts and references, can be found at the UCTV site.

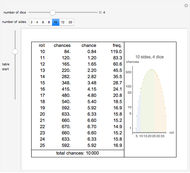

Snapshot 1: a basic starting point

Snapshot 2: changing the base rate

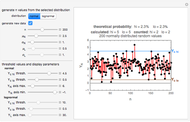

Snapshot 3: changing the specificity

Permanent Citation