Maximum Likelihood Estimators for Binary Outcomes

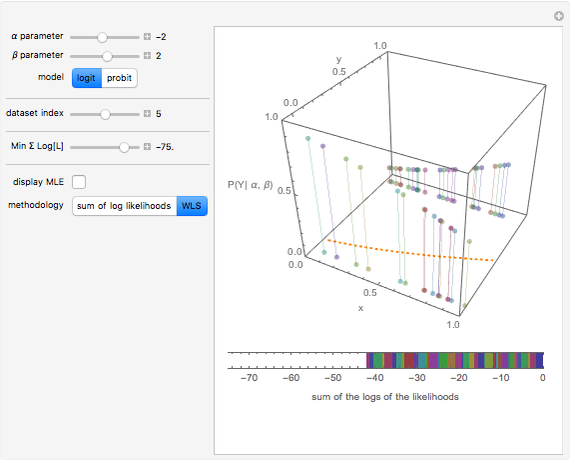

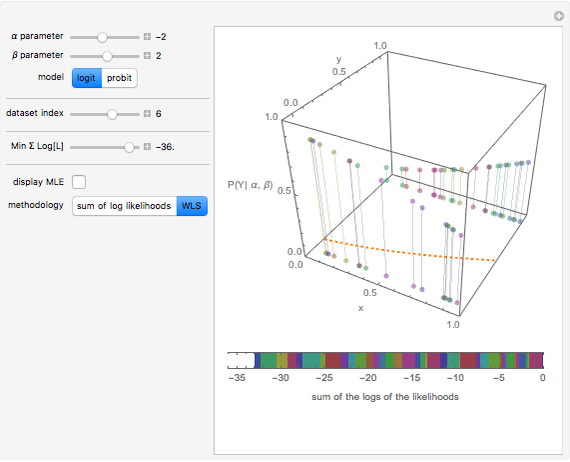

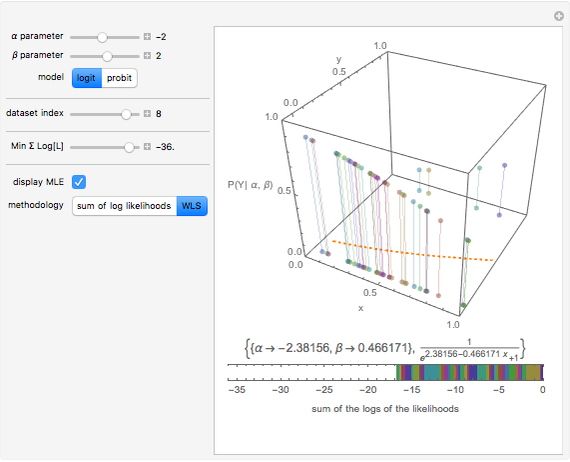

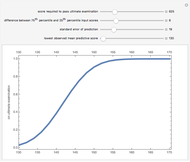

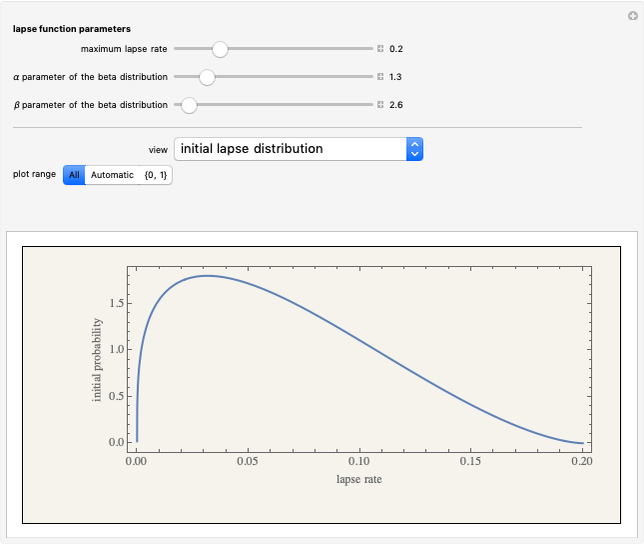

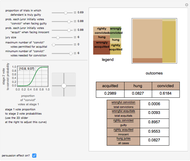

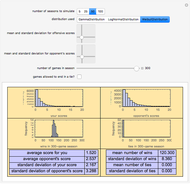

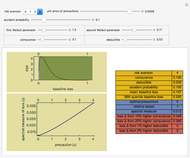

Regressions often involve binary outcome data: the object is to predict, based on various data, some event that will or will not occur. This Demonstration shows how to derive the maximum likelihood estimates of the coefficients in the probit and logit regressions that are typically used to model data with a binary outcome. You can specify a dataset for examination, make guesses as to the two regression parameters, and choose a logit or probit regression model. The top panel shows the data, the current regression model (orange line) and the probability (likelihood) that each y-value would occur for a given x-value, given the two parameters. The bottom panel shows the sum of the log of each of these likelihoods. Selecting the maximum likelihood estimates of the two parameters will make this sum as large as possible and the displayed rectangle as small as possible. You can specify the minimum displayed value of the sum of the log likelihoods. If you are curious, you can also request a computation of the correct answer for each dataset, which may be done either directly or using an iterative weighted least squares (WLS) process.
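To make the quantity in the bottom panel concrete, here is a minimal Python sketch of the sum of log likelihoods for a guessed pair of parameters. It is not the Demonstration's own Wolfram Language code. The names `b0` (intercept), `b1` (slope), `logit_prob`, `probit_prob` and `log_likelihood` are hypothetical, and the toy dataset is invented for illustration.

```python
import math

def logit_prob(eta):
    """Logistic CDF: modelled P(y = 1) for linear predictor eta."""
    return 1.0 / (1.0 + math.exp(-eta))

def probit_prob(eta):
    """Standard normal CDF: modelled P(y = 1) for linear predictor eta."""
    return 0.5 * (1.0 + math.erf(eta / math.sqrt(2.0)))

def log_likelihood(b0, b1, xs, ys, link=logit_prob):
    """Sum of the logs of the likelihoods of each (x, y) data point."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = link(b0 + b1 * x)                  # probability that y == 1 at this x
        likelihood = p if y == 1 else 1.0 - p  # probability of the observed y
        total += math.log(likelihood)
    return total

# Hypothetical toy dataset (not taken from the Demonstration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0, 0, 1, 0, 1, 1]

# Compare a few guesses; a better guess gives a larger (less negative) sum.
for b0, b1 in [(0.0, 0.0), (-2.0, 1.0), (-2.5, 1.0)]:
    ll_logit = log_likelihood(b0, b1, xs, ys, link=logit_prob)
    ll_probit = log_likelihood(b0, b1, xs, ys, link=probit_prob)
    print(f"b0={b0:5.2f} b1={b1:5.2f} logit={ll_logit:8.4f} probit={ll_probit:8.4f}")
```

With both parameters at zero, each point has likelihood 0.5 under either model, so the sum is six times log 0.5. Guesses that track the data raise the sum toward zero, which corresponds to shrinking the rectangle in the bottom panel.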

Contributed by: Seth J. Chandler (March 2011)

After work by: Darren Glosemeyer and J. Scott Long

Open content licensed under CC BY-NC-SA

Snapshots

Details

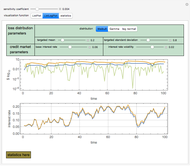

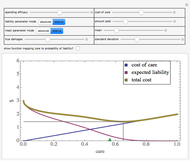

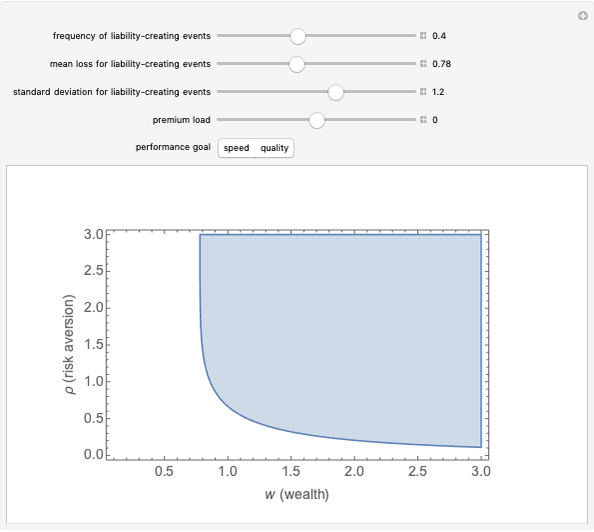

The Demonstration employs two alternative methodologies to compute the maximum likelihood estimators. The first method uses global optimization routines to find the parameter values that maximize the sum of the logs of the likelihoods of each data point. The second method uses an iterative weighted least squares process: it makes assumptions about weights, figures out what coefficients minimize the weighted residuals, figures out what weights are consistent with the resulting model, and so on until convergence is reached. Consistent with predictions, the results of the two methodologies appear to be the same in all cases. On larger datasets, the weighted least squares method is expected to be faster, owing in part to the intricacies of global optimization.
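The iterative weighted least squares idea can be sketched in a few lines of Python for the logit case with a single predictor. This is an illustrative sketch under stated assumptions, not the Demonstration's implementation: the function names, the starting values, the tolerance and the toy dataset are all hypothetical, and the weight and working-response formulas are the standard ones for the logit model (a probit fit would need different weights).

```python
import math

def sigmoid(eta):
    """Logistic CDF: modelled P(y = 1) for linear predictor eta."""
    return 1.0 / (1.0 + math.exp(-eta))

def log_likelihood(b0, b1, xs, ys):
    """Sum of the logs of the likelihoods of each data point (logit model)."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(b0 + b1 * x)
        total += math.log(p if y == 1 else 1.0 - p)
    return total

def irls_logit(xs, ys, tol=1e-10, max_iter=100):
    """Fit intercept b0 and slope b1 by iteratively reweighted least squares."""
    b0, b1 = 0.0, 0.0  # assumed starting guess
    for _ in range(max_iter):
        s_w = s_wx = s_wxx = s_wz = s_wxz = 0.0
        for x, y in zip(xs, ys):
            eta = b0 + b1 * x
            p = sigmoid(eta)
            w = p * (1.0 - p)        # weight implied by the current model
            z = eta + (y - p) / w    # working response
            s_w += w
            s_wx += w * x
            s_wxx += w * x * x
            s_wz += w * z
            s_wxz += w * x * z
        # Solve the 2x2 weighted normal equations for the new coefficients.
        det = s_w * s_wxx - s_wx * s_wx
        new_b0 = (s_wxx * s_wz - s_wx * s_wxz) / det
        new_b1 = (s_w * s_wxz - s_wx * s_wz) / det
        converged = abs(new_b0 - b0) + abs(new_b1 - b1) < tol
        b0, b1 = new_b0, new_b1
        if converged:
            break
    return b0, b1

# Hypothetical toy dataset (not taken from the Demonstration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0, 0, 1, 0, 1, 1]

b0_hat, b1_hat = irls_logit(xs, ys)
print("estimates:", b0_hat, b1_hat)
print("log likelihood at estimates:", log_likelihood(b0_hat, b1_hat, xs, ys))
```

At convergence the estimates should also satisfy the first-order conditions of the log likelihood, which is why a direct maximization is expected to land on the same values. The sketch assumes the data are not perfectly separable; with separable data the estimates diverge and the weights shrink toward zero.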

The optimization code used here can be readily extended to address more complex models.

The controls of this Demonstration will generally respond more swiftly if "compute MLE" is unchecked.
